Build your faith belief foundation

Are you looking for answers to life’s most pressing questions?

I’ll help you understand the Bible while making sense of our mixed-up world.

Understand the Bible

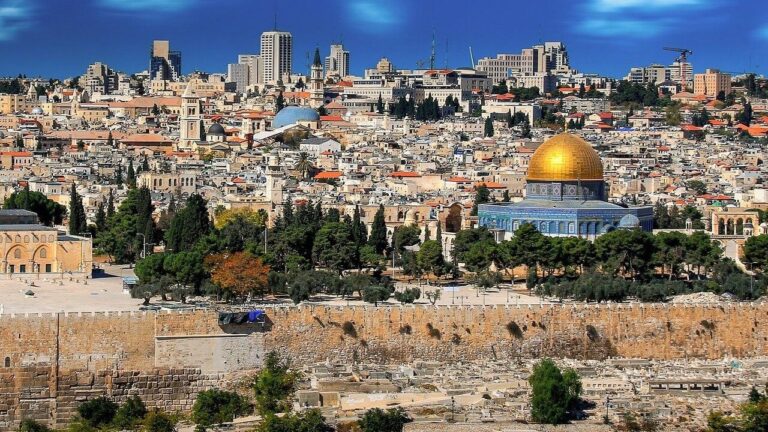

Luke 21: Jerusalem Surrounded By Armies

Jesus warned us that when armies compass Jerusalem, those in Judea should flee. We typically envision armies of men, but is that what Jesus was referring to?

Ask a question

What Does The Bible Say About Suicide?

The Bible provides several examples of suicide. It also tells us about honorable men who wanted to die, but God strengthened them and helped them endure.

How Did Judas Die?

Did Judas hang himself or fall, and who purchased the potter’s field, Judas or the priests? Do these accounts contradict each other? Let’s open the Bible to clear it up.

Where Do We Go When We Die?

We are all born, and we all pass away, but where do we go when we die? The Bible makes it very clear: we instantly return to God, who created our spirit.

Make sense of the news

Christian commentary

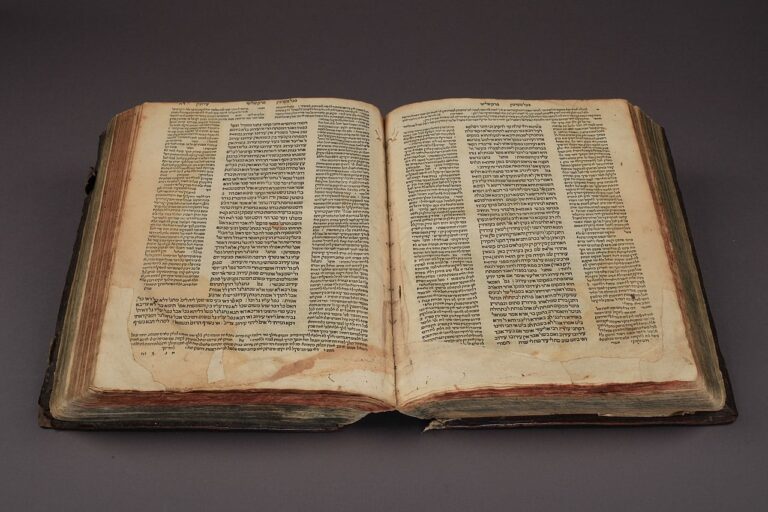

Talmud: The Dangers Of Judaism

We commonly hear that the Jews are God’s chosen people and that Judeo-Christian values bind us together. So what does the Talmud say about the values of Judaism?

A Personal Story Of Patience

I have a personal story for you today. I originally planned to post another article I wrote this morning titled, “People See Who Jesus Is By Looking At Our Lives”. I proofread that one yesterday

God Is Amazing: Look At This Awesome Universe Size Comparison

More often than not these days, we are told God does not exist, that He is a figment of our imagination. Yet, when we observe the universe, we can see God does exist by looking